Claude Is Not Your Copywriter. It Is Your Reviewer.

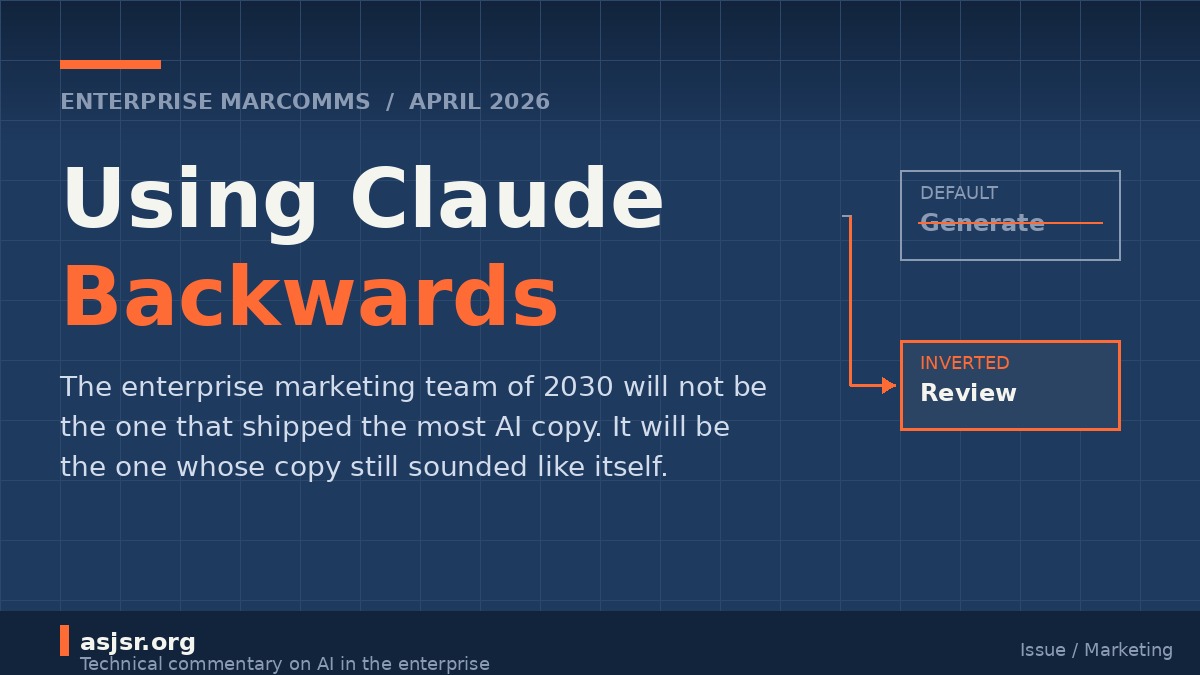

Most enterprise marketing teams are using Claude backwards. They point it at an empty document and ask for a blog post, a launch email, a press release, a product page. Production climbs. Brand voice collapses, because a model trained on the open web will, absent strong constraint, regress to the statistical middle of that same web. The teams extracting real value have inverted the workflow: humans write, and Claude reviews.

The generation default

Walk into a Fortune 500 marcomms team in 2026 and you will find Claude slotted into the seat the junior copywriter used to occupy. First drafts. Campaign variants. Email sequences. Product page frameworks. The business case is easy to build. Content velocity looks great on a dashboard, and Claude now holds 29% of the enterprise AI assistant market, with digital marketing cited as one of its top workloads.

Here is the problem. Industry analysis from March 2026 found that 74.2% of new web pages now contain detectable AI-generated content. When every team pulls from the same foundation models with the same generic prompts, output converges. Polish goes up. Distinctiveness goes down. In controlled comparisons, human-written content receives 5.44x more traffic than AI-generated equivalents, because readers have grown fluent in spotting the averaged voice.

Most teams respond by documenting brand voice harder. Longer style guides. More detailed prompt templates. Bigger Brand Kit uploads. It rarely works at scale, because the style guide was never the binding constraint. The binding constraint was judgment.

What Claude is actually good at

Large language models are extraordinary pattern recognizers and indifferent originators. Ask one to produce a fresh take on a crowded topic and you get consensus. Ask it to evaluate three drafts of that same topic against eighteen brand voice criteria and it will catch things a tired human reviewer misses on draft number seven of the day.

This asymmetry is the whole game:

- Generation is unbounded. The model chooses from an astronomical output space with no external reference point, so it drifts toward the mean of its training data.

- Evaluation is bounded. The model receives a finite artifact and a finite rubric, and renders a judgment. The search space collapses.

Evaluation is where Claude runs laps around the humans in a marcomms pipeline. Not because it has taste. Because it is tireless, consistent, and cheap.

The inverted workflow

Flip the pipeline and the team looks different.

Humans write the first draft. Maybe they start with Claude as a thinking partner. Maybe they do not. The draft reflects a specific point of view from a specific person who has met the customer, sat through the QBR, and read the product spec.

Then Claude reviews. Not with a vague "make this better" prompt, but against a concrete evaluation rubric encoded as a system prompt or a Claude Project. Something like:

- Does the piece open with a declarative sentence under fifteen words?

- Does it contain any of the forbidden phrases on the brand's no-fly list?

- Does it assert a point of view, or does it hedge?

- Does it reference a specific customer, number, or mechanism, or is it abstract?

- Does the final paragraph echo the opening thesis?

- Is the reading level consistent with the channel target?

The rubric is the brand. Codified, testable, and re-runnable across every piece of copy the company ships.
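
Two of the criteria above are mechanical enough to check without a model at all. What follows is a minimal Python sketch, not a prescribed implementation. It assumes a hypothetical no-fly list and a deliberately naive sentence splitter, and names such as `FORBIDDEN_PHRASES` and `check_opening_length` are illustrative only:

```python
import re
from dataclasses import dataclass

# Hypothetical no-fly list; a real one would come from the brand team.
FORBIDDEN_PHRASES = ["game-changing", "best-in-class", "unlock the power"]


@dataclass
class CheckResult:
    criterion: str
    passed: bool
    detail: str


def first_sentence(text: str) -> str:
    """Return the text up to and including the first '.', '!' or '?'.

    Naive on purpose: decimals and abbreviations will split early.
    """
    stripped = text.strip()
    match = re.search(r"[^.!?]*[.!?]", stripped)
    return match.group(0).strip() if match else stripped


def check_opening_length(text: str, max_words: int = 15) -> CheckResult:
    """Pass when the opening sentence has fewer than max_words words."""
    word_count = len(first_sentence(text).split())
    return CheckResult(
        criterion="opening_under_fifteen_words",
        passed=word_count < max_words,
        detail=f"opening sentence has {word_count} words",
    )


def check_forbidden_phrases(text: str) -> CheckResult:
    """Pass when no phrase from the no-fly list appears (case-insensitive)."""
    lowered = text.lower()
    hits = [phrase for phrase in FORBIDDEN_PHRASES if phrase in lowered]
    detail = ("found: " + ", ".join(hits)) if hits else "none found"
    return CheckResult(
        criterion="no_forbidden_phrases",
        passed=not hits,
        detail=detail,
    )
```

The judgment calls (point of view, specificity, whether the close echoes the thesis) still go to Claude. Cheap deterministic checks like these simply keep the model's attention on the questions that need it.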

The style guide becomes an eval

Traditional brand voice documentation is a PDF nobody opens. The inverted workflow turns it into something executable. Each criterion becomes a line in a prompt. Each prompt becomes a test that runs against every draft before it leaves the building. Over time the eval suite gets sharper, because the team feeds it examples of copy that shipped and underperformed, copy that shipped and won, and copy that got killed in review.

This is the same move engineering teams made when they turned tribal debugging knowledge into automated test suites. The artifact is different. The discipline is identical.
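
One way to picture "each criterion becomes a line in a prompt" is to hold the rubric as data and render the prompt from it. The Python sketch below is an assumption about how a team might do this. The `Criterion` fields, the `build_review_prompt` helper, and the instruction wording are all hypothetical, and the model call itself is left out:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    key: str                 # short identifier used in reports
    question: str            # the yes/no question the reviewer answers
    good_example: str = ""   # optional copy that passes
    bad_example: str = ""    # optional copy that fails


def build_review_prompt(criteria: list[Criterion]) -> str:
    """Render the criteria as a numbered rubric for a reviewing model."""
    lines = [
        "You are reviewing a draft against the brand rubric below.",
        "For each criterion, answer PASS or FAIL and quote the evidence.",
        "",
    ]
    for number, criterion in enumerate(criteria, start=1):
        lines.append(f"{number}. [{criterion.key}] {criterion.question}")
        if criterion.good_example:
            lines.append(f"   Passing example: {criterion.good_example}")
        if criterion.bad_example:
            lines.append(f"   Failing example: {criterion.bad_example}")
    return "\n".join(lines)


rubric = [
    Criterion(
        key="point_of_view",
        question="Does it assert a point of view, or does it hedge?",
        bad_example="It may be worth considering a different approach.",
    ),
    Criterion(
        key="specificity",
        question="Does it reference a specific customer, number, or mechanism?",
    ),
]

print(build_review_prompt(rubric))
```

The rendered text can be pasted into a system prompt or a Claude Project's instructions. Keeping the rubric as data means a new criterion or counterexample is a one-line change, and the same list can drive reporting later.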

A few things change once the team adopts this stance:

- The style guide stops being prose and becomes structured criteria with worked examples and counterexamples.

- Creative reviews move faster because Claude pre-filters the obvious misses before humans read a word.

- Junior writers learn brand voice faster, because they get specific feedback on every draft instead of a scattershot edit from a senior once a week.

- The CMO gets a dashboard showing which criteria copy fails most often, which channels drift, and which writers land closest to the brand signal.

None of this requires exotic tooling. A Claude Project holding the brand rubric, a handful of scored examples, and a consistent review prompt will carry most teams further than a Jasper seat license ever did.
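
As a sense of how little tooling the dashboard needs, the per-criterion failure tally behind it fits in a few lines of Python. This is a sketch under stated assumptions: the review log format (one record per draft with a `failed` list of criterion keys) and the `failure_rates` helper are hypothetical, and the sample records are made up for illustration:

```python
from collections import Counter

# Hypothetical review log: one record per reviewed draft.
review_log = [
    {"draft": "launch-email-v1", "failed": ["hedges", "abstract"]},
    {"draft": "product-page-v3", "failed": ["hedges"]},
    {"draft": "press-release-v2", "failed": []},
    {"draft": "blog-post-v1", "failed": ["hedges", "forbidden_phrase"]},
]


def failure_rates(reviews: list[dict]) -> list[tuple[str, float]]:
    """Return (criterion, share of drafts failing it), most frequent first.

    Ties are broken alphabetically so the ordering is stable.
    """
    total = len(reviews)
    if total == 0:
        return []
    counts = Counter()
    for review in reviews:
        # set() guards against a criterion being logged twice for one draft.
        counts.update(set(review["failed"]))
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(criterion, count / total) for criterion, count in ranked]


for criterion, rate in failure_rates(review_log):
    print(f"{criterion}: {rate:.0%} of drafts")
```

Grouping the same log by channel or by writer before calling `failure_rates` would give the other two views the dashboard bullet describes.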

Why governance is the enterprise opportunity

The homogenization research keeps arriving at the same business finding. Brand consistency can lift revenue by 10 to 33%. Most companies have documented brand guidelines. Only about 30% actively apply them. The gap between having a brand and enforcing it is the gap where Claude earns its keep in the enterprise.

This matters more in regulated industries. Financial services, healthcare, and pharma marcomms teams already route every piece of copy through compliance review. They know what an evaluation-centric workflow looks like, because they have been running one with humans for decades. They are also the teams most likely to get value from Claude first, because the shape of the work already fits.

For unregulated industries, the opportunity is to build the same muscle before the homogenization problem eats the brand. The copy that shipped last quarter is now training data that competitors are using to generate their copy this quarter. A brand voice not protected by an evaluator becomes fuel for the averaging engine.

What to build this quarter

Teams that want to pilot the inversion in the next ninety days tend to do five things:

- Audit the last fifty pieces of shipped copy and extract the rules that separate the strong from the mediocre. Write these as concrete, testable criteria.

- Build a Claude Project that holds the criteria, six gold-standard examples, and six anti-examples.

- Wire a review step into the content workflow. Every draft gets scored before a human editor touches it.

- Track which criteria fail most often, and use that signal to tighten briefs at the top of the funnel.

- Resist every request to let Claude also write the piece. The value is in the separation of roles.

The last point is the hardest. Someone on the team will always argue that Claude can do both, and from a cost standpoint they are right. The reason to hold the line is that blind spots compound. If the same model writes and reviews, the reviewer inherits a blind spot for exactly the failures the writer is prone to. Diversity in the pipeline is what keeps the eval honest, which is why Microsoft's new Critique feature has one model generate and another review, rather than collapsing both roles into a single assistant.

The last word

The enterprise marcomms team of 2030 will not be the one that shipped the most AI-generated copy. It will be the one that shipped the most copy that sounded like itself. That outcome belongs to teams that figure out early that their scarce resource was never drafting. It was judgment. And the best use of Claude in a marketing organization is not to replace the writer. It is to arm the reviewer.