The Agent Harness Is the Operating System You Forgot to Build

Your AI agent isn't failing because of bad prompts. Three years of obsessing over system messages, temperature settings, and fine-tuning have produced a generation of engineers who know exactly what to say to a model but have no idea how to keep one alive in production. The bottleneck in agentic AI is not intelligence. It is the execution environment, the engineering problem nobody has solved.

The Harness Is Not a Wrapper

Ask most engineers what a "harness" is and they'll say it's the scaffolding around a model. Convenient tools. A retry loop. Maybe some logging. That definition misses the point entirely.

A harness is an operating system for agent cognition. It governs what the agent perceives, when it wakes, which tools it can reach, how it recovers from failure, and what constitutes "done." Strip the harness from any production agent and what remains is not a broken agent. What remains is nothing useful at all.
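To make those responsibilities concrete, here is a minimal sketch, assuming a "model" that is just a function from an observation string to a `(tool, argument)` pair. `Harness`, `perceive`, `call_tool`, and `run` are hypothetical names, not any real framework's API. The point is where the decisions live: the model proposes, and the harness controls perception, tool access, recovery, and the stopping rule.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Harness:
    """Hypothetical minimal harness: the model proposes actions, the harness decides everything else."""
    tools: Dict[str, Callable[[str], str]]   # which tools the agent can reach
    is_done: Callable[[List[str]], bool]     # what constitutes "done"
    max_steps: int = 10                      # hard stop so a run cannot loop forever
    max_retries: int = 2                     # recovery policy for tools that raise
    history: List[str] = field(default_factory=list)

    def perceive(self, event: str) -> str:
        # What the agent perceives: the waking event plus its three most recent results.
        return f"event={event}; recent={self.history[-3:]}"

    def call_tool(self, name: str, arg: str) -> str:
        if name not in self.tools:
            return f"error: tool '{name}' is not available"
        last_error = None
        for _ in range(self.max_retries + 1):
            try:
                return self.tools[name](arg)
            except Exception as exc:  # retry, then surface the failure as an observation
                last_error = exc
        return f"error: {name} failed after {self.max_retries + 1} attempts: {last_error}"

    def run(self, event: str, model: Callable[[str], Tuple[str, str]]) -> List[str]:
        # An event wakes the agent; the loop ends on "done" or when the step budget runs out.
        for _ in range(self.max_steps):
            tool, arg = model(self.perceive(event))
            self.history.append(self.call_tool(tool, arg))
            if self.is_done(self.history):
                break
        return self.history
```

A stub model such as `lambda obs: ("echo", "hi")` is enough to exercise the loop. Swapping in a real model call changes nothing about who enforces the rules.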

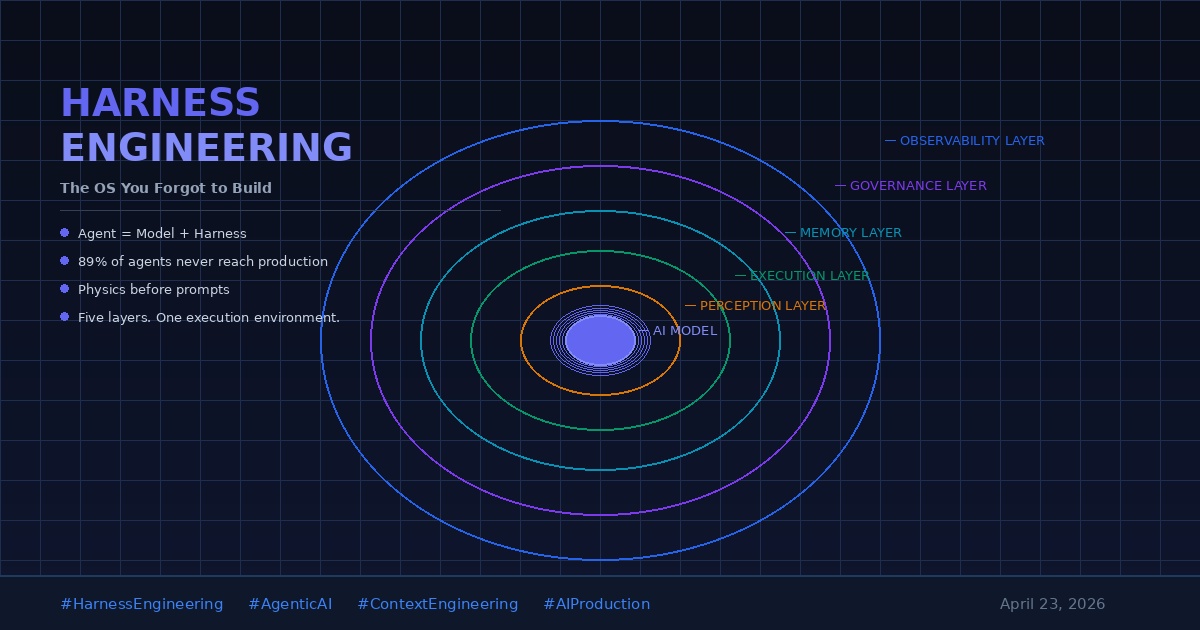

The shift in framing matters:

- Prompt engineering defines what you say to a model

- Context engineering defines what the model knows when you say it

- Harness engineering defines the physics of the agent's existence

Physics is the right word. An agent cannot reason about time if its harness has no concept of a clock. It cannot coordinate with other agents if its harness has no communication bus. It cannot recover from mistakes if its harness has no memory of what happened. The model's intelligence operates inside the laws of the harness.
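One way to read the physics claim in code: the harness owns the clock and the communication channel, and the agent only sees what they provide. This sketch assumes a simple in-process bus; `Bus`, `Message`, and `utc_now` are hypothetical, and a production system would more likely sit on a durable queue. Because the clock is injected, every message carries a harness-assigned timestamp and a copy of the task context, so a handoff does not depend on the model remembering either.

```python
from collections import deque
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Deque, Dict, Optional


def utc_now() -> datetime:
    return datetime.now(timezone.utc)


@dataclass
class Message:
    sender: str
    recipient: str
    body: str
    context: dict        # carried with the task so a handoff does not lose state
    sent_at: datetime    # stamped by the harness clock, not guessed by the model


@dataclass
class Bus:
    """Hypothetical in-process bus; the harness owns both the clock and the channel."""
    clock: Callable[[], datetime] = utc_now
    inboxes: Dict[str, Deque[Message]] = field(default_factory=dict)

    def send(self, sender: str, recipient: str, body: str, context: dict) -> None:
        # Copy the context so later changes by the sender cannot alter what was handed off.
        msg = Message(sender, recipient, body, dict(context), self.clock())
        self.inboxes.setdefault(recipient, deque()).append(msg)

    def receive(self, agent: str) -> Optional[Message]:
        inbox = self.inboxes.get(agent)
        return inbox.popleft() if inbox else None
```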

Why 89% of Agent Projects Die Before Production

The statistic is brutal: 89% of AI agent initiatives never reach production. Analysts blame governance gaps, hallucination rates, cost overruns. These are symptoms.

The actual cause is harness debt. Teams ship models inside harnesses built in an afternoon. Six months later, they discover that the agent cannot:

- Resume interrupted work after a crash

- Pause when it detects an anomaly

- Report meaningful state to a human reviewer

- Hand off a task to a different agent without losing context

- Stay within a budget, because it has no access to the ledger that tracks spending

None of these failures belong to the model. Every single one belongs to the harness.
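The first failure, resuming after a crash, is a harness feature rather than a model feature. Here is a minimal sketch, assuming steps are identified by strings and progress is checkpointed to a local JSON file. `run_with_checkpoints` and its parameters are hypothetical.

```python
import json
import os
from typing import Callable, List


def run_with_checkpoints(steps: List[str], checkpoint_path: str,
                         execute: Callable[[str], None]) -> List[str]:
    """Hypothetical sketch: persist progress after each step so a crashed run resumes where it stopped."""
    completed: List[str] = []
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            completed = json.load(f)["completed"]
    for step in steps:
        if step in completed:
            continue  # finished before the crash; skip instead of repeating side effects
        execute(step)  # if this raises, the checkpoint still reflects every earlier step
        completed.append(step)
        # Write a temp file, then swap it in with os.replace (atomic on POSIX within one
        # filesystem), so a crash mid-write cannot leave a half-written checkpoint.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as f:
            json.dump({"completed": completed}, f)
        os.replace(tmp_path, checkpoint_path)
    return completed
```

A step that finishes but crashes before its checkpoint is written will run again on resume, so `execute` should be idempotent (or deduplicated by a key) for anything with side effects.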

The Five Layers of a Production-Grade Harness

Harness engineering is not a single problem. It is five distinct problems that most teams treat as one vague bucket labeled "infrastructure."

1. The Perception Layer

What the agent can observe. This includes tool definitions, memory retrieval, real-time data feeds, and the information structure of incoming tasks. A perception layer that returns flat text when the agent needs typed, structured data is a bug that no prompt can fix.
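A sketch of the difference between flat text and typed perception, assuming tickets arrive as dicts with `id`, `priority`, `created_at`, and `resolved` keys. The field names, the priority scheme, and `perceive_ticket` are illustrative assumptions. The harness validates once and hands the agent a typed object, so a malformed priority becomes an error the harness can handle rather than text the model has to interpret.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Dict

VALID_PRIORITIES = {"low", "normal", "high", "critical"}  # assumed priority scheme


@dataclass(frozen=True)
class TicketObservation:
    """Hypothetical typed observation the perception layer hands to the agent."""
    ticket_id: str
    priority: str
    created_at: datetime
    resolved: bool


def perceive_ticket(raw: Dict[str, Any]) -> TicketObservation:
    # Validate and type the payload once, in the harness, instead of asking the model to parse text.
    priority = str(raw["priority"]).lower()
    if priority not in VALID_PRIORITIES:
        raise ValueError(f"unknown priority: {raw['priority']!r}")
    created = datetime.fromisoformat(raw["created_at"])
    if created.tzinfo is None:
        created = created.replace(tzinfo=timezone.utc)  # assumption: naive timestamps are UTC
    return TicketObservation(
        ticket_id=str(raw["id"]),
        priority=priority,
        created_at=created,
        resolved=bool(raw.get("resolved", False)),
    )
```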

2. The Execution Layer

What the agent can do. Tool definitions are not enough. The harness must enforce capability boundaries, handle tool failures gracefully, and track side effects. An agent that can execute a payment but cannot roll it back operates inside a dangerous harness.
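A minimal sketch of an execution layer, assuming each tool may register a compensating `undo` action. `ToolSpec` and `Executor` are hypothetical names. The allowlist enforces capability boundaries, every call is recorded as a side effect, and `rollback` undoes reversible effects newest-first (the compensating-action, or saga, pattern) while reporting the ones that cannot be undone.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set, Tuple


@dataclass
class ToolSpec:
    run: Callable[[dict], dict]
    undo: Optional[Callable[[dict], None]] = None  # compensating action; None means irreversible


@dataclass
class Executor:
    """Hypothetical execution layer: an allowlist plus a record of every side effect."""
    tools: Dict[str, ToolSpec]
    allowed: Set[str]
    side_effects: List[Tuple[str, dict]] = field(default_factory=list)

    def call(self, name: str, args: dict) -> dict:
        if name not in self.allowed:
            raise PermissionError(f"agent is not allowed to call {name}")
        result = self.tools[name].run(args)
        self.side_effects.append((name, result))  # track every effect, reversible or not
        return result

    def rollback(self) -> List[str]:
        """Undo recorded effects newest-first; return names of effects that could not be undone."""
        irreversible: List[str] = []
        while self.side_effects:
            name, result = self.side_effects.pop()
            undo = self.tools[name].undo
            if undo is None:
                irreversible.append(name)
            else:
                undo(result)
        return irreversible
```

With a `pay` tool whose `undo` issues a refund, a failed downstream step can call `rollback()`. An irreversible tool, such as sending an email, shows up in the returned list so a human can follow up.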

3. The Memory Layer

What the agent remembers across sessions. Short-term context windows and long-term persistent memory serve different functions and require different engineering. Most teams conflate them, then wonder why their agents repeat mistakes they documented three heartbeats (scheduled runs) ago.
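The two kinds of memory need different mechanics. This sketch assumes a bounded in-process window for short-term context and a JSON file for long-term lessons. Both classes are hypothetical, and the keyword `recall` stands in for whatever retrieval a real system would use.

```python
import json
from collections import deque
from pathlib import Path
from typing import List


class ShortTermMemory:
    """Hypothetical bounded working context for the current run; old items fall off the front."""

    def __init__(self, max_items: int = 20):
        self.items = deque(maxlen=max_items)

    def add(self, text: str) -> None:
        self.items.append(text)


class LongTermMemory:
    """Hypothetical persistent store of lessons that survives across sessions (a JSON file here)."""

    def __init__(self, path: Path):
        self.path = path
        self.lessons: List[str] = json.loads(path.read_text()) if path.exists() else []

    def record(self, lesson: str) -> None:
        self.lessons.append(lesson)
        self.path.write_text(json.dumps(self.lessons))

    def recall(self, keyword: str) -> List[str]:
        # Naive case-insensitive keyword match; a real system might use search or embeddings.
        return [lesson for lesson in self.lessons if keyword.lower() in lesson.lower()]
```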

4. The Governance Layer

Who approves what, and when. Human-in-the-loop checkpoints, budget caps, escalation paths, and approval workflows belong in the harness, not in the prompt. When governance lives in prompts, it drifts. When it lives in the harness, it enforces.
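A sketch of governance enforced in code, assuming costs are tracked in integer cents and that `approve` is some blocking call to a human reviewer. `Governor`, `needs_approval`, and `BudgetExceeded` are hypothetical. Unlike an instruction in a prompt, the budget check cannot be talked around: an over-budget action raises before it runs.

```python
from dataclasses import dataclass, field
from typing import Callable, List


class BudgetExceeded(Exception):
    pass


@dataclass
class Governor:
    """Hypothetical governance layer: budget caps and approval rules live in code, not in the prompt."""
    budget_cents: int
    needs_approval: Callable[[str, int], bool]  # policy: which actions require a human
    approve: Callable[[str, int], bool]         # assumed hook into a human review queue
    spent_cents: int = 0
    log: List[str] = field(default_factory=list)

    def authorize(self, action: str, cost_cents: int) -> bool:
        if self.spent_cents + cost_cents > self.budget_cents:
            raise BudgetExceeded(f"{action} would exceed budget")
        if self.needs_approval(action, cost_cents) and not self.approve(action, cost_cents):
            self.log.append(f"denied: {action}")
            return False
        self.spent_cents += cost_cents
        self.log.append(f"approved: {action}")
        return True
```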

5. The Observability Layer

What you can see from the outside. Logs, run traces, tool call audit trails, and status transitions are not optional. Without observability, an agent in production is a black box: it succeeds or it fails, and nothing tells you why.
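A minimal observability sketch, assuming JSON-lines records and a small status model (`queued`, `running`, `blocked`, and the terminal `succeeded` and `failed`) chosen only for illustration. `RunTrace` and `ALLOWED_TRANSITIONS` are hypothetical. Rejecting illegal transitions at write time keeps the trace trustworthy as a diagnostic record.

```python
import json
import time
from typing import Any, List

# Assumed status model for illustration; terminal states have no outgoing transitions.
ALLOWED_TRANSITIONS = {
    "queued": {"running"},
    "running": {"blocked", "succeeded", "failed"},
    "blocked": {"running", "failed"},
}


class RunTrace:
    """Hypothetical append-only trace: every tool call and status change becomes one JSON line."""

    def __init__(self, run_id: str):
        self.run_id = run_id
        self.status = "queued"
        self.lines: List[str] = []

    def _emit(self, kind: str, **fields: Any) -> None:
        record = {"run_id": self.run_id, "ts": time.time(), "kind": kind, **fields}
        self.lines.append(json.dumps(record))

    def tool_call(self, tool: str, args: dict, ok: bool, detail: str = "") -> None:
        self._emit("tool_call", tool=tool, args=args, ok=ok, detail=detail)

    def transition(self, new_status: str, reason: str) -> None:
        if new_status not in ALLOWED_TRANSITIONS.get(self.status, set()):
            raise ValueError(f"illegal transition {self.status} -> {new_status}")
        self._emit("status", old=self.status, new=new_status, reason=reason)
        self.status = new_status
```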

The Intent-Harness Gap

Intent engineering has emerged as the discipline of specifying desired behavior with enough precision that an agent executes without guessing. Teams practicing intent engineering document not just "what to do" but "under what conditions, with what constraints, stopping when."

The problem: intent can only be honored if the harness can enforce it.

Consider an agent with a perfectly specified intent: "summarize all customer support tickets flagged critical in the last 48 hours, then escalate any unresolved ones to the on-call engineer." Beautiful specification. Clean intent. Now remove the harness layer that reads ticket flags, checks timestamps, and has access to the escalation API.

The agent hallucinates a summary and sends a message to nobody.

Intent without harness is fiction.
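Here is the ticket example with the harness put back, as a hedged sketch. `Ticket`, `fetch_tickets`, `escalate`, and `run_critical_ticket_intent` are hypothetical stand-ins for a ticketing API and an escalation API. The harness checks its capabilities before doing anything, applies the 48-hour and critical-flag conditions itself, and only then would pass the filtered tickets to the model for summarizing (that model call is omitted).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Callable, List, Optional


@dataclass
class Ticket:
    """Hypothetical ticket record returned by an assumed ticketing API."""
    id: str
    flag: str
    created_at: datetime
    resolved: bool


def run_critical_ticket_intent(
    fetch_tickets: Optional[Callable[[], List[Ticket]]],
    escalate: Optional[Callable[[str], None]],
    now: datetime,
) -> List[str]:
    """Hypothetical sketch: the harness, not the model, enforces the intent's conditions."""
    # Preflight: if a required capability is missing, fail loudly instead of letting the model improvise.
    required = (("fetch_tickets", fetch_tickets), ("escalate", escalate))
    missing = [name for name, fn in required if fn is None]
    if missing:
        raise RuntimeError(f"harness cannot honor intent; missing capabilities: {missing}")
    window_start = now - timedelta(hours=48)
    critical = [t for t in fetch_tickets() if t.flag == "critical" and t.created_at >= window_start]
    escalated: List[str] = []
    for ticket in critical:
        if not ticket.resolved:
            escalate(ticket.id)
            escalated.append(ticket.id)
    # The summary step would hand `critical` to the model here, as typed input.
    return escalated
```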

What Harness Engineering Looks Like in Practice

Teams that ship agents to production share a pattern. They do not start with prompts. They start with a harness design document that answers:

- What events wake this agent?

- What tools does it need, and what does failure from each tool look like?

- Where does it store state between runs?

- What is the escalation path when it is blocked?

- What does a successful run look like, measurably?

- What does an unsafe run look like, and how is it stopped?

Only after answering these questions do they write the model instructions. The harness comes first because the harness defines the problem space the model must navigate.
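The six questions translate naturally into a structured record that can be reviewed and checked. This is a hypothetical sketch: `HarnessDesign` and its field names are illustrative, not a standard format. The `unanswered` check simply flags empty answers, a crude but useful gate before anyone writes model instructions.

```python
from dataclasses import dataclass, fields
from typing import Dict, List


@dataclass
class HarnessDesign:
    """Hypothetical design record mirroring the six questions; field names are illustrative."""
    wake_events: List[str]              # What events wake this agent?
    tool_failure_modes: Dict[str, str]  # Tool name -> what failure from that tool looks like
    state_store: str                    # Where state lives between runs
    escalation_path: str                # What happens when the agent is blocked
    success_metric: str                 # What a successful run looks like, measurably
    stop_conditions: List[str]          # What an unsafe run looks like, and what stops it

    def unanswered(self) -> List[str]:
        # Any empty answer means the team is not ready to write model instructions yet.
        return [f.name for f in fields(self) if not getattr(self, f.name)]
```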

The New Engineering Discipline

Martin Fowler published on harness engineering in early 2026. Red Hat followed with a structured workflow guide. The concept is moving from practitioner blogs to enterprise architecture conversations at roughly the speed "DevOps" moved from mailing list to org chart between 2009 and 2012.

The analogy holds: DevOps did not make developers better at writing code. It made code deployable. Harness engineering does not make models smarter. It makes agents deployable.

The engineers who understand this distinction will ship production agents. The ones still iterating on prompts will keep presenting demos.

Build the Physics First

The most reliable sign that a team is ready for production agentic AI is not the quality of their prompts. It is not even the model they chose. It is whether they have drawn a harness diagram and argued about it for an afternoon. Every constraint, every capability boundary, every failure mode mapped out before a single system message is written.

Agents do not fail because they are dumb. Agents fail because they are free-floating minds dropped into physics they cannot navigate. Build the physics first, and the intelligence will follow.