Why the Best AI Engineers in 2026 Are Obsessed With Failure

The best AI engineers in 2026 spend most of their time trying to break things.

Not testing in the traditional sense. Not writing unit tests or validating edge cases against a spec. The engineers producing the most reliable agentic systems today spend their days designing for failure: cataloguing it, categorizing it, and building infrastructure that makes it visible before it reaches production. They've accepted a counterintuitive truth that most teams resist: you cannot build a reliable AI agent by optimizing the happy path. You build one by becoming obsessed with every way it goes wrong.

Failure mode design is the core engineering skill of 2026.

What Nobody Tells You About Harness Engineering

If you've read anything about the discipline emerging from OpenAI's and Anthropic's research labs, you've heard the pitch: harness engineering is about constraints, tools, feedback loops, and verification systems. Build the environment, not just the instructions. The agent is capable; the harness is where the work lives.

All of that is true. But it misses the cognitive shift that makes harness engineers effective.

The harness is not a scaffolding system you build once and hand to an agent. It's a living map of your agent's failure surface. Every constraint in a well-designed harness exists because an engineer sat down and asked: "What breaks here? How does it break? And when it breaks, what does the system need to know?" The constraint is downstream. The failure analysis is the work.

This is the insight the popular discourse skips: the harness is a crystallized record of failures you've already studied.

The Three Failure Surfaces Every Harness Must Address

Agentic systems fail in predictable categories. Experienced engineers build harnesses around all three.

- Context decay. Long-running agents lose track of their own goals. After dozens of tool calls and thousands of tokens of accumulated history, the model's attention drifts. The early constraints get diluted. The original objective gets paraphrased into something adjacent. A harness designed for context decay includes checkpoints that re-anchor the agent to its goal state, compression strategies that remove low-signal history without losing critical decisions, and verification probes that confirm the agent can still articulate what it's trying to do.

- Tool hallucination. Agents don't just hallucinate facts. They hallucinate tool calls — invoking functions with plausible but fabricated parameters, chaining calls in sequences that look syntactically valid but are semantically impossible, or calling deprecated interfaces they encountered in training data. A harness designed for tool hallucination includes strict schema validation, execution sandboxes that fail loudly and descriptively, and retry logic with state rollback so a bad tool call doesn't corrupt the workspace.

- Silent success. The most dangerous failure mode is the agent that completes its task and returns a confident result that is subtly wrong. No error thrown. No blocked status. Just a plausible output that diverges from intent in ways a downstream consumer won't catch. A harness designed to catch silent success includes evaluation agents, output diffs against a known-good baseline, and human-readable summaries of what changed and why, not just whether the task completed.
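To make the tool hallucination case concrete, here is a minimal sketch of schema validation plus state rollback. Everything in it is an assumption made for illustration: the `ToolSpec` shape, the in-memory dict standing in for a workspace, and the `write_file` tool are hypothetical, not any particular framework's API.

```python
import copy
from dataclasses import dataclass
from typing import Any, Callable


class ToolCallError(Exception):
    """Raised with a descriptive message whenever a tool call is rejected."""


@dataclass(frozen=True)
class ToolSpec:
    params: dict[str, type]      # parameter name -> required Python type
    handler: Callable[..., Any]  # called as handler(workspace, **args)


def write_file(workspace: dict, path: str, content: str) -> None:
    """Hypothetical tool. It writes first and checks second, to simulate
    a tool that fails after it has already mutated the workspace."""
    workspace[path] = content
    if path.startswith("/"):
        raise ValueError("absolute paths are not allowed")


REGISTRY = {"write_file": ToolSpec({"path": str, "content": str}, write_file)}


def validate_call(registry: dict, name: str, args: dict) -> None:
    """Reject unknown tools, missing or invented parameters, and wrong types."""
    if name not in registry:
        raise ToolCallError(f"unknown tool {name!r}; known tools: {sorted(registry)}")
    spec = registry[name]
    missing = sorted(set(spec.params) - set(args))
    unexpected = sorted(set(args) - set(spec.params))
    if missing or unexpected:
        raise ToolCallError(f"{name}: missing={missing} unexpected={unexpected}")
    for key, expected in spec.params.items():
        if not isinstance(args[key], expected):
            raise ToolCallError(
                f"{name}: {key!r} must be {expected.__name__}, "
                f"got {type(args[key]).__name__}"
            )


def execute_with_rollback(registry: dict, workspace: dict, name: str, args: dict) -> None:
    """Validate, snapshot, run. On any failure, restore the snapshot and fail loudly."""
    validate_call(registry, name, args)
    snapshot = copy.deepcopy(workspace)
    try:
        registry[name].handler(workspace, **args)
    except Exception as exc:
        workspace.clear()
        workspace.update(snapshot)
        raise ToolCallError(f"{name} failed and was rolled back: {exc}") from exc
```

The error messages are written for the agent as much as for the engineer: a rejection that names the missing parameter gives the next attempt something to work with. A real workspace would need a cheaper snapshot than a deep copy, which is one reason sandboxes and version control tend to show up in production harnesses.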

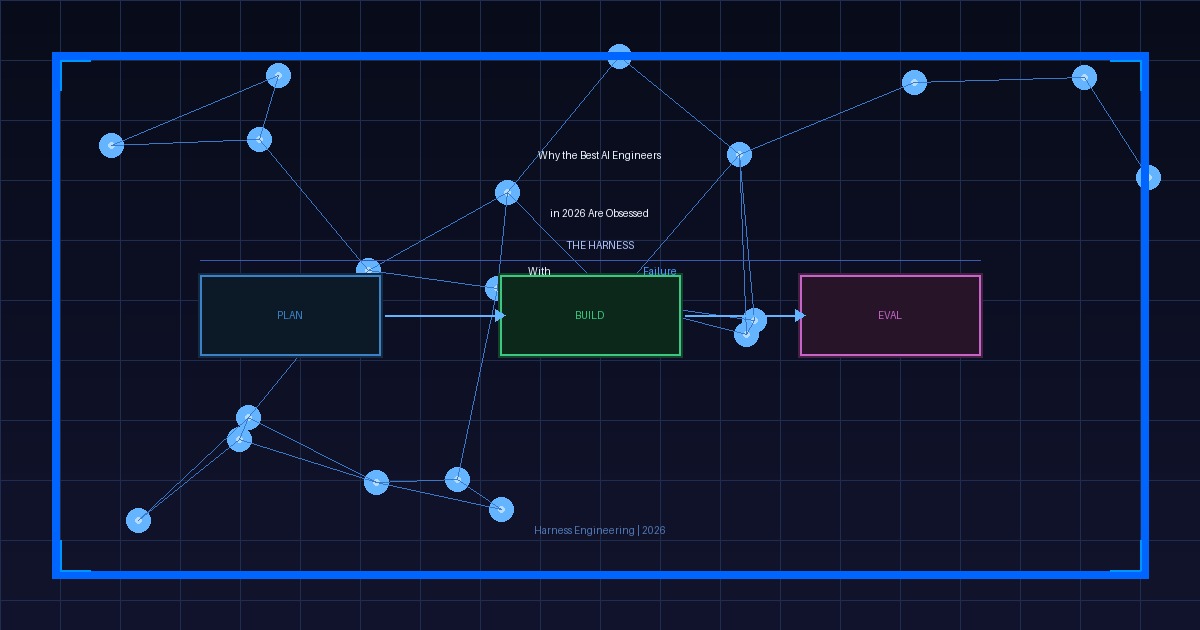

Anthropic's Three-Agent Design and What It Actually Solves

Anthropic's recently published three-agent harness — separating planning, generation, and evaluation into distinct agent roles — is not primarily an architecture for parallelism or cost optimization. It's an architecture for failure isolation.

When planning, generation, and evaluation share the same context window, failures compound silently. A flawed plan corrupts generation. A confident but wrong generation contaminates evaluation. The evaluation agent, shaped by the same context as the generation agent, tends to validate what was generated rather than challenge it.

Separation breaks that contamination path. The evaluation agent has no memory of the generation agent's reasoning. It sees only the artifact and the original objective. That structural distance is what makes the harness work — not the specialization of the agents, but the deliberate gaps between them.

- The gap between planning and generation forces a translation step that surfaces underspecified requirements.

- The gap between generation and evaluation prevents the "I remember how hard that was to write" bias from softening the critique.

- The gap between evaluation and the next iteration creates an audit trail — a record of what failed and why.

Every gap in a multi-agent system is a failure detection surface. Engineers who understand that design the gaps with intention. Engineers who don't tend to design for efficiency instead, and optimize the gaps away.
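The gaps are easier to see in code. The sketch below is not Anthropic's implementation; it is a minimal illustration, assuming each agent is a plain callable and that isolation is enforced purely by what each one is handed. The names `run_pipeline`, `Verdict`, and `AuditEntry`, and the three stand-in agents, are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    passed: bool
    reason: str


@dataclass
class AuditEntry:
    iteration: int
    plan: str
    verdict: Verdict


def run_pipeline(
    objective: str,
    planner: Callable[[str, str], str],
    generator: Callable[[str], str],
    evaluator: Callable[[str, str], Verdict],
    max_iterations: int = 3,
) -> tuple[Optional[str], list[AuditEntry]]:
    """Run plan -> generate -> evaluate, passing each role only what it needs."""
    audit: list[AuditEntry] = []
    feedback = ""
    for iteration in range(1, max_iterations + 1):
        plan = planner(objective, feedback)       # gap 1: objective becomes a plan
        artifact = generator(plan)                # generator sees the plan only
        verdict = evaluator(objective, artifact)  # gap 2: no generator reasoning
        audit.append(AuditEntry(iteration, plan, verdict))  # gap 3: audit trail
        if verdict.passed:
            return artifact, audit
        feedback = verdict.reason
    return None, audit


# Stand-in agents for illustration; real ones would be separate model calls,
# each with its own context window.
def toy_planner(objective: str, feedback: str) -> str:
    return f"{objective} | fix: {feedback}" if feedback else objective


def toy_generator(plan: str) -> str:
    return plan.upper() if "fix" in plan else plan


def toy_evaluator(objective: str, artifact: str) -> Verdict:
    return Verdict(artifact.isupper(), "output must be uppercase")


artifact, audit = run_pipeline("say hello", toy_planner, toy_generator, toy_evaluator)
```

The function signatures carry the design. The evaluator cannot be swayed by the generator's reasoning because it never receives it, and the audit list is the record of what failed and why.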

The Constraint Paradox, Explained by Failure Mode Thinking

One finding from 2026's production deployments surprised teams that hadn't done the failure mode work upfront: adding constraints to an agent increases its output quality, even though those constraints shrink the solution space.

The paradox dissolves once you understand what constraints actually do. A constraint is a preemptive response to a failure mode the harness engineer already identified. Linters, forbidden patterns, output schema requirements, forced checkpoints — these are not restrictions on agent creativity. They are the engineer encoding their knowledge of where this system will fail into the environment itself.

The agent that operates inside tight constraints doesn't produce worse work. It produces work in a space where the engineer has already removed the known failure modes. The remaining failures are novel, surfaced quickly, and become the next iteration of constraints.

That feedback loop is the harness.
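As a rough sketch of that loop, assume each constraint is a named forbidden pattern checked against the agent's output. The `Harness` class, the constraint names, and the regexes below are hypothetical examples, not a recommended rule set.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Constraint:
    failure_mode: str  # the name the team gave the failure this encodes
    pattern: str       # regex that must not appear in agent output


class Harness:
    def __init__(self) -> None:
        self.constraints: list[Constraint] = []

    def add_constraint(self, failure_mode: str, pattern: str) -> None:
        """Encode a studied failure mode as a check on every future output."""
        self.constraints.append(Constraint(failure_mode, pattern))

    def check(self, output: str) -> list[str]:
        """Return the names of every known failure mode found in the output."""
        return [
            c.failure_mode
            for c in self.constraints
            if re.search(c.pattern, output, flags=re.IGNORECASE)
        ]


harness = Harness()
harness.add_constraint("uses-eval", r"\beval\(")

# A novel failure slips through the first time...
incident_output = "requests.get(url, verify=False)"
before = harness.check(incident_output)

# ...then gets named and encoded, so the next occurrence is loud.
harness.add_constraint("disables-tls-verification", r"verify\s*=\s*False")
after = harness.check(incident_output)
```

Regexes are a blunt instrument, and a real harness would more likely lean on linters or schema checks. The shape is the point: each incident ends with one more named entry in the list.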

What Changes About Your Daily Work

The practical shift is immediate and uncomfortable for engineers who've spent years measuring productivity by code written.

- Before touching a new agent workflow, spend time cataloguing what it will get wrong. Write down the top ten ways it fails. Be specific: not "the agent might misunderstand the task" but "the agent will interpret 'update the schema' as permission to drop columns rather than add them."

- Design your harness around those failure modes before writing a single prompt. What constraint prevents the column drop? What verification confirms the schema change was additive? What audit log captures the diff for human review?

- After each production incident, extract the failure mode, name it, and add a harness constraint that makes it impossible — or at minimum, loudly visible — in the next iteration.

- Treat your harness as a living document of organizational knowledge. The constraints it contains are the encoded lessons of every failure your team has studied. That is the asset, not the agent configuration.
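Taking the "update the schema" example from the list above, one possible verification is a before-and-after diff that rejects anything other than added columns. This is a sketch under a simplifying assumption: a schema is modeled as a dict of column name to type string, and `diff_schema` and `assert_additive` are hypothetical helpers rather than part of any database library.

```python
def diff_schema(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """Summarize a schema change as added, dropped, and retyped columns."""
    shared = set(before) & set(after)
    return {
        "added": sorted(set(after) - set(before)),
        "dropped": sorted(set(before) - set(after)),
        "retyped": sorted(col for col in shared if before[col] != after[col]),
    }


def assert_additive(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """Raise if the change drops or retypes a column; otherwise return the diff
    so it can be written to an audit log for human review."""
    diff = diff_schema(before, after)
    if diff["dropped"] or diff["retyped"]:
        raise ValueError(f"schema change is not additive: {diff}")
    return diff


old = {"id": "int", "email": "text"}
ok_diff = assert_additive(old, {"id": "int", "email": "text", "created_at": "timestamp"})
```

The same function answers all three questions in that bullet: the raise is the constraint, the check is the verification, and the returned diff is the audit record.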

The Engineer Who Breaks Things on Purpose

The discipline of harness engineering is, at its core, a discipline of structured pessimism. Not the kind that slows teams down with excessive caution, but the kind that makes systems durable by taking failure seriously as a design input. The engineers who've internalized this don't waste time tuning prompts for the happy path. They spend that time in the failure space — mapping it, naming it, building the infrastructure that catches it before it propagates.

The most reliable agentic systems in production today weren't built by engineers who were optimistic about what AI could do. They were built by engineers who were methodical about what AI would break.